First and foremost, it must be stated that GSES is very optimistic about the capability of new energy storage technology. GSES is confident that those involved in the transition of the electricity grid are moving quickly to make new technology better, cheaper, safer and more secure. That said, one topic that has not been discussed in any great detail is the cyber security of Battery Management Systems (BMS), which play a critical role in most new energy storage technology.

The principal role of the BMS is to facilitate the safe charging and discharging of lithium ion batteries. There are many different types of BMS and various topologies in use; however, the aim is the same: to keep each cell within tolerance while cycling the battery according to the needs of the user. Keeping each cell within tolerance not only ensures battery longevity but also plays a critical role in battery safety.

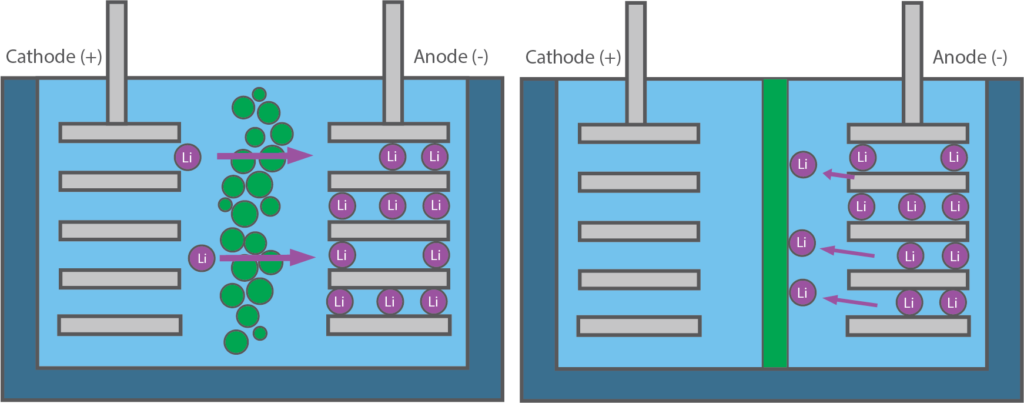

The BMS should always monitor cell voltage. If a cell is over voltage, the anode's ability to accept charge is reduced. As this happens, lithium metal can plate onto the anode, reducing the charge capacity of the battery. If charging continues, lithium dendrites can form, grow away from the anode, cross the separator and create an internal short circuit (bad). In addition, exceeding the over voltage tolerance can lead to an exothermic reaction between the cathode and the electrolyte. This reaction can lead to thermal runaway and also generates carbon dioxide gas, which raises the internal pressure of the cell and can cause it to fail mechanically (also bad).

If a cell is under voltage, some of the conductive metal (typically copper, if present) may shed from the anode, causing a reduction in charge capacity. The metal may dissolve in the electrolyte and later precipitate out when the cell is charged. If this happens enough, a short circuit pathway could form between the anode and cathode (not good). Additionally, if a cell is under voltage, oxygen can be released from the lithium metal oxide cathode, degrading cell performance over many cycles. If enough oxygen is created, the internal pressure of the cell will increase, potentially causing the cell to fail mechanically (also not good).
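The voltage checks described above amount to comparing each cell against a tolerance window and blocking whichever operation would push an out-of-tolerance cell further out. The Python below is a minimal sketch only, assuming hypothetical limits of 2.5 V and 4.2 V per cell; real limits depend on the chemistry and must come from the cell datasheet. The names `classify_cell_voltage` and `allowed_actions` are illustrative, not part of any real BMS API.

```python
from __future__ import annotations

from enum import Enum

# Hypothetical limits for illustration only; real values come from the
# cell manufacturer's datasheet and differ between chemistries.
V_MIN = 2.5  # volts, assumed under voltage limit
V_MAX = 4.2  # volts, assumed over voltage limit


class VoltageState(Enum):
    UNDER = "under voltage"
    OK = "within tolerance"
    OVER = "over voltage"


def classify_cell_voltage(v: float, v_min: float = V_MIN, v_max: float = V_MAX) -> VoltageState:
    """Classify one cell voltage against the tolerance window."""
    if v < v_min:
        return VoltageState.UNDER
    if v > v_max:
        return VoltageState.OVER
    return VoltageState.OK


def allowed_actions(cell_voltages: list[float]) -> dict[str, bool]:
    """Decide whether the pack may charge or discharge.

    Any over voltage cell blocks further charging (risk of lithium plating,
    dendrites and cathode/electrolyte reactions); any under voltage cell
    blocks further discharging (risk of metal shedding and oxygen release).
    """
    states = [classify_cell_voltage(v) for v in cell_voltages]
    return {
        "charge": VoltageState.OVER not in states,
        "discharge": VoltageState.UNDER not in states,
    }
```

For example, `allowed_actions([3.7, 4.25, 3.6])` blocks charging because one cell exceeds the assumed 4.2 V limit, while still permitting discharge.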

The BMS should also always monitor cell temperature. If a cell is under temperature, the chemical reactions in a lithium ion battery slow down. Generally, charging will not be allowed below 0 degrees Celsius (though some manufacturers are working on reduced temperature charging). If charging is allowed at low temperature, it can again cause lithium metal plating on the anode. If a cell is over temperature to the point of internal cell composition breakdown (the breakdown temperature varies, but is generally about 110 degrees Celsius), the lithium can react directly with the electrolyte, causing thermal runaway.

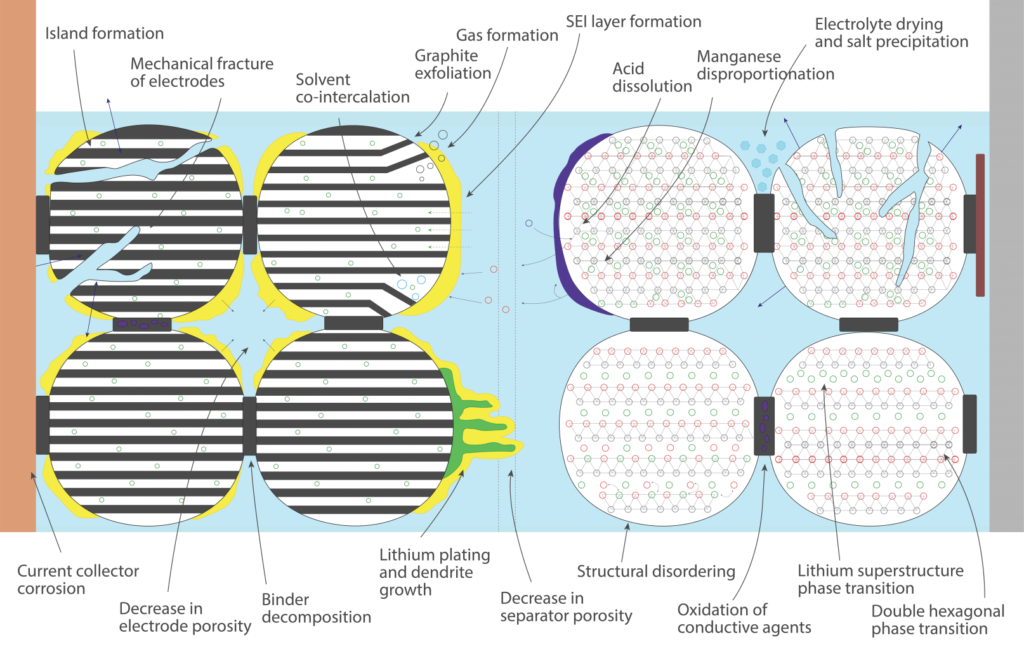
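The temperature rules above can be sketched in the same way as the voltage window. This minimal Python illustration takes the 0 degrees Celsius charging limit and the roughly 110 degrees Celsius breakdown figure from the text, and assumes two further hypothetical thresholds (a -20 degrees Celsius discharge limit and a 60 degrees Celsius over temperature cut-off) purely as placeholders; real values must come from the cell datasheet.

```python
from __future__ import annotations

# Thresholds in degrees Celsius. T_CHARGE_MIN and T_BREAKDOWN follow the
# figures quoted in the text; the other two are hypothetical placeholders.
T_CHARGE_MIN = 0.0       # charging generally not allowed below this
T_DISCHARGE_MIN = -20.0  # hypothetical lower limit for discharging
T_CUTOFF = 60.0          # hypothetical over temperature cut-off
T_BREAKDOWN = 110.0      # approximate internal breakdown temperature


def temperature_permissions(t_cell: float) -> dict[str, bool]:
    """Return which operations a cell at t_cell degrees Celsius may perform."""
    return {
        # Charging below T_CHARGE_MIN risks lithium plating on the anode.
        "charge": T_CHARGE_MIN <= t_cell < T_CUTOFF,
        "discharge": T_DISCHARGE_MIN <= t_cell < T_CUTOFF,
        # At or above breakdown, thermal runaway is possible: raise an alarm.
        "critical": t_cell >= T_BREAKDOWN,
    }


def pack_permissions(cell_temps: list[float]) -> dict[str, bool]:
    """The pack may only do what every cell permits; any critical cell flags the pack."""
    per_cell = [temperature_permissions(t) for t in cell_temps]
    return {
        "charge": all(p["charge"] for p in per_cell),
        "discharge": all(p["discharge"] for p in per_cell),
        "critical": any(p["critical"] for p in per_cell),
    }
```

A single cold cell is enough to block charging for the whole pack: `pack_permissions([20.0, -2.0, 30.0])` still allows discharge but not charge.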

There are also a number of other lithium ion degradation mechanisms caused by thermal stress, mechanical stress, rate or number of intercalations, charge levels, etc. These degradation mechanisms are shown in the figure below.

Figure 1: Degradation mechanisms. (Source: Yu Merla, Imperial College London)

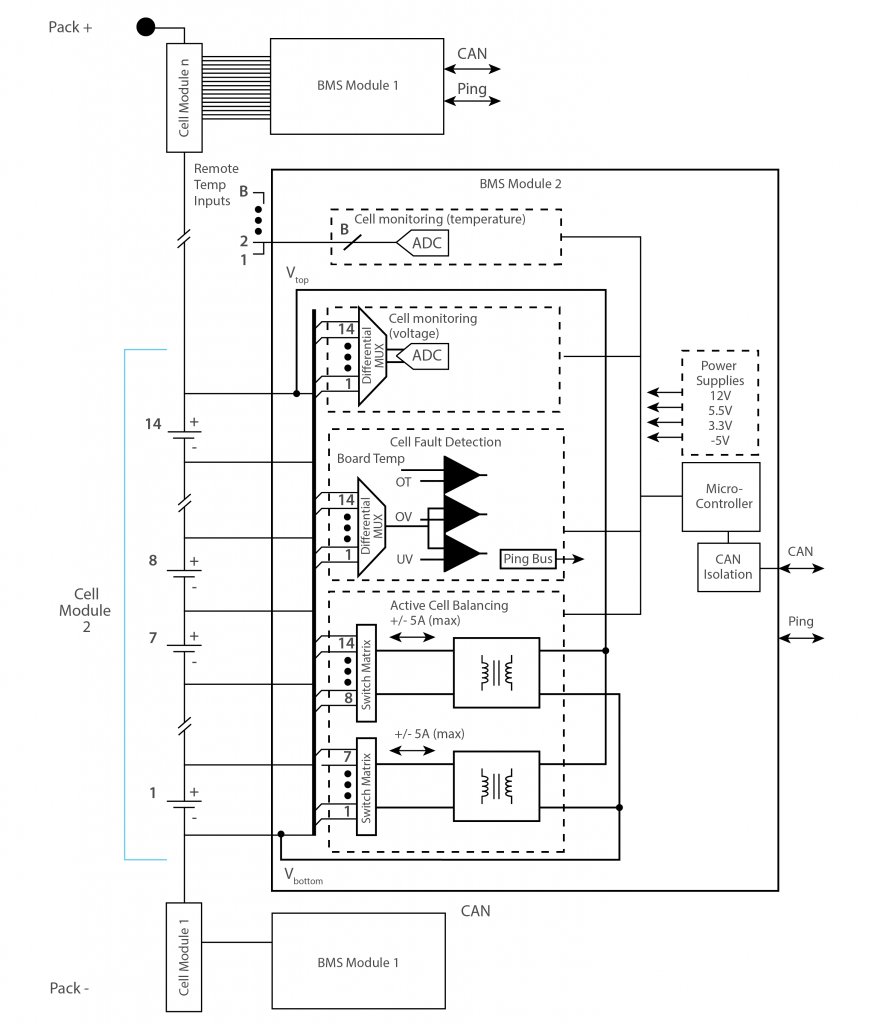

A BMS should, at a minimum, monitor the voltage and temperature of each cell. The BMS will then use either active or passive cell balancing to charge, discharge or isolate each cell as appropriate. An active BMS uses integrated circuits and MOSFETs (metal-oxide-semiconductor field-effect transistors) to shunt current across fully charged cells. A passive BMS uses dump resistors to dissipate energy from fully charged cells. The figure below is a simple schematic of an active BMS topology from Texas Instruments.

Source: Texas Instruments EM1401EVM

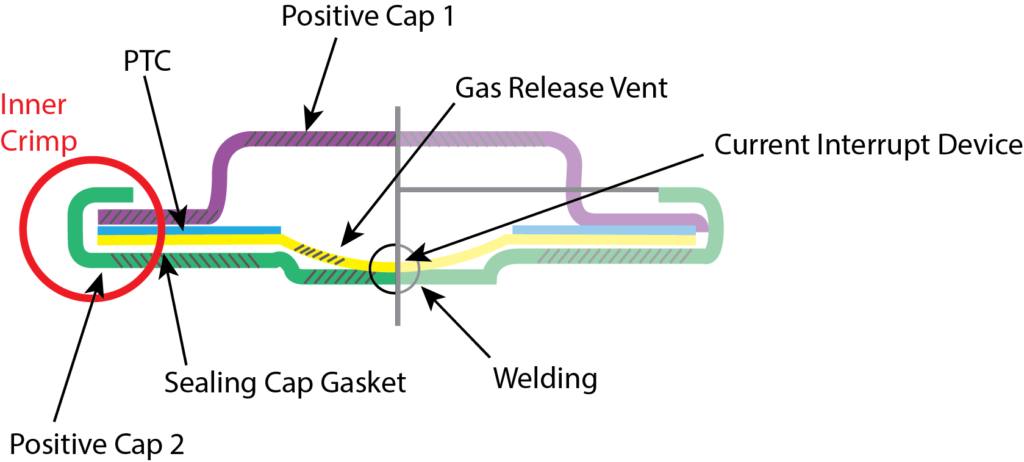
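The passive approach described above, dissipating energy from the most charged cells through dump resistors, can be sketched in a few lines. This minimal Python example assumes a simple rule (bleed any cell sitting more than a chosen tolerance above the lowest cell) and a hypothetical 33 Ω bleed resistor; real balancing algorithms, thresholds and component values vary between products. The power dissipated follows P = V²/R, which is why passive balancing turns excess charge into heat.

```python
from __future__ import annotations

R_BLEED_OHMS = 33.0  # hypothetical bleed (dump) resistor value


def cells_to_bleed(voltages: list[float], tolerance_v: float = 0.02) -> list[int]:
    """Indices of cells more than tolerance_v above the lowest cell.

    A passive balancer would switch each of these cells' bleed resistor on
    until the cell voltages converge.
    """
    v_low = min(voltages)
    return [i for i, v in enumerate(voltages) if v - v_low > tolerance_v]


def bleed_power_w(v_cell: float, r_ohms: float = R_BLEED_OHMS) -> float:
    """Heat dissipated in the bleed resistor: P = V^2 / R."""
    return v_cell ** 2 / r_ohms
```

With example voltages of 4.10, 4.15, 4.11 and 4.20 V, cells 1 and 3 would be bled, and at 4.2 V each assumed 33 Ω resistor would dissipate roughly 0.53 W.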

If the BMS were to fail, the results could be very dangerous. It is therefore important that lithium ion cells also have some form of mechanical protection. Lithium ion cells should include an internal pressure release valve which operates if the internal pressure reaches a dangerous level. This way, though the battery will still fail, it will release its pressure in a safe manner.

Lithium ion cells should also include a separator made from a material that facilitates thermal shutdown. At a temperature just below that of thermal runaway, these separator materials change state, creating a physical barrier that prevents the passage of ions.

The BMS is also becoming more sophisticated. Beyond its critical safety and operational functions, it can also provide detailed diagnostics and analytics. The data gathered can be used to increase performance and cell longevity, provide better State of Health (SoH) metrics and even forecast throughput and capacity attenuation under variable battery conditions, providing valuable operations and maintenance information. To do this, the parameters in the table below could be monitored for the following uses:

| Parameter | Use |
| --- | --- |
| Voltage | • Under/Over Voltage for basic BMS Safety and State of Charge (SoC) • dV/dt for charge/discharge magnitude and duration |
| Temperature | • Under/Over Temperature for basic BMS Safety • dT/dt for extrapolation of internal stress |
| Current | • For Coulomb counting to substantiate SoC • dI/dt for determination of internal resistance |
| Internal pressure | • Monitor for charge retention attenuation and SoH indicator • dP/dt for internal battery fault warning |
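Two of the uses in the table, Coulomb counting to substantiate SoC and rate-of-change signals such as dT/dt, can be sketched as follows. This is a minimal illustration assuming a known rated capacity, a sign convention of positive current for charging, evenly spaced samples and a hypothetical alarm threshold `max_rate`; practical SoC estimation typically also corrects the accumulated drift of pure Coulomb counting, which is omitted here.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class CoulombCounter:
    """Tracks state of charge (SoC) by integrating measured current."""

    capacity_ah: float  # rated capacity in amp-hours (assumed known)
    soc: float          # current estimate, 0.0 (empty) to 1.0 (full)

    def update(self, current_a: float, dt_s: float) -> float:
        """Add current_a (A, positive = charging) flowing for dt_s seconds."""
        delta_ah = current_a * dt_s / 3600.0  # amp-seconds to amp-hours
        self.soc += delta_ah / self.capacity_ah
        self.soc = min(1.0, max(0.0, self.soc))  # clamp to physical range
        return self.soc


def rate_of_change(samples: list[float], dt_s: float) -> list[float]:
    """Finite-difference derivative (e.g. dT/dt) of samples taken every dt_s seconds."""
    return [(b - a) / dt_s for a, b in zip(samples, samples[1:])]


def rising_too_fast(samples: list[float], dt_s: float, max_rate: float) -> bool:
    """True if any step rises faster than max_rate (a hypothetical alarm threshold)."""
    return any(r > max_rate for r in rate_of_change(samples, dt_s))
```

For example, a 100 Ah pack at 50 % SoC discharged at 50 A for one hour would read 0.0, and temperature samples of 25.0, 25.5 and 27.0 degrees Celsius taken 10 s apart give rates of 0.05 and 0.15 degrees per second.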

Energy storage will increasingly be viewed as critical infrastructure. Nationalsecurity.gov.au defines critical infrastructure as:

“Those physical facilities, supply chains, information technologies and communication networks which, if destroyed, degraded or rendered unavailable for an extended period, would significantly impact the social or economic wellbeing of the nation or affect Australia’s ability to conduct national defence and ensure national security”

Energy storage is now being deployed to perform critical grid support functions, including demand response and, eventually, frequency control ancillary services (FCAS). In South Australia, for example, energy storage may in the very near future be called upon to mitigate what AEMO calls Lack of Reserve (LOR) events, where there isn’t enough supply in the system to ensure that any single event will not cause a major network issue (such as a widespread blackout). As was the case in February 2017, if additional supply sources cannot be brought online, lack of reserve is managed with an actual load shedding event (what AEMO calls LOR3). The social and economic impacts of load shedding or blackouts are, needless to say, significant.

This is the main concern for the cyber security of energy storage systems. As energy storage is increasingly relied upon to provide grid support when the grid is at its most vulnerable, these systems must be protected from cyber intrusion. This is especially true for shared ownership assets, or assets which provide ancillary services but are owned by end use customers. Energy storage systems that participate in these functions must be network connected, and their control systems can then link to the BMS via a simple industrial protocol such as CAN bus or MODBUS. This kind of system vulnerability is not unique to energy storage, and such exposures have been exploited in the past (see the Ukrainian power grid hacks of 2015 and 2016 https://www.wired.com/story/crash-override-malware/). “Hacking” the BMS could override or disable its safety and protective functions, creating a hazard or interfering with the energy storage system’s ability to provide energy when required. As new assets are added to the electricity network, it is hoped that information and communication technology (ICT) network security will be scrutinised. If network authorities plan appropriately for the inclusion of energy storage technologies, they have the potential to create an exceedingly robust, reliable and inexpensive electricity system.
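One common mitigation for this kind of exposure is to authenticate control commands before they reach the BMS, since plain CAN bus and MODBUS frames, as commonly deployed, carry no built-in authentication. The Python sketch below illustrates the idea at a hypothetical gateway sitting between the network-connected controller and the BMS: each command carries a monotonic counter (to reject replays), is signed with HMAC-SHA256 using Python's standard `hmac` module, and is range-checked before forwarding. The message layout, the shared key, the `sign_command` function and the `CommandVerifier` class are all assumptions for illustration; key provisioning, rotation and confidentiality are out of scope.

```python
from __future__ import annotations

import hashlib
import hmac
import struct

# Hypothetical shared key; real deployments would provision keys securely per device.
KEY = b"example-key-do-not-use"
PAYLOAD_FMT = ">Qi"  # assumed layout: 8-byte counter, 4-byte signed setpoint (W)
PAYLOAD_LEN = struct.calcsize(PAYLOAD_FMT)  # 12 bytes
TAG_LEN = hashlib.sha256().digest_size      # 32 bytes


def sign_command(key: bytes, counter: int, setpoint_w: int) -> bytes:
    """Pack a hypothetical power setpoint command and append an HMAC-SHA256 tag."""
    payload = struct.pack(PAYLOAD_FMT, counter, setpoint_w)
    return payload + hmac.new(key, payload, hashlib.sha256).digest()


class CommandVerifier:
    """Gateway-side checks applied before a command is forwarded to the BMS."""

    def __init__(self, key: bytes, max_abs_setpoint_w: int) -> None:
        self.key = key
        self.max_abs_setpoint_w = max_abs_setpoint_w
        self.last_counter = -1  # highest counter accepted so far

    def verify(self, message: bytes) -> int | None:
        """Return the setpoint if the command is acceptable, otherwise None."""
        if len(message) != PAYLOAD_LEN + TAG_LEN:
            return None
        payload, tag = message[:PAYLOAD_LEN], message[PAYLOAD_LEN:]
        expected = hmac.new(self.key, payload, hashlib.sha256).digest()
        if not hmac.compare_digest(tag, expected):
            return None  # forged or corrupted message
        counter, setpoint_w = struct.unpack(PAYLOAD_FMT, payload)
        if counter <= self.last_counter:
            return None  # replay of an old command
        if abs(setpoint_w) > self.max_abs_setpoint_w:
            return None  # authenticated but outside the configured safe range
        self.last_counter = counter
        return setpoint_w
```

A replayed copy of an accepted message, a message signed with the wrong key, a tampered payload, or a setpoint outside the configured range is rejected (returns `None`) and would not be forwarded to the BMS. The range check matters because authentication alone does not stop a compromised but legitimately keyed controller from sending unsafe values.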